The Rate of AI Diffusion

On the Distance Between Capability and Adoption

02 - The Canary Papers: Signals in the Age of AI

On the afternoon of September 4, 1882, Thomas Edison stood in the office of J. Pierpont Morgan in lower Manhattan and gave the signal. His chief electrician closed the switch. Four hundred lamps came on across one square mile of New York's financial district. The New York Times, one of Edison's first customers, ran the story the following day under "Miscellaneous City News."

It is worth pausing on that detail. The birth of the electric age was not the lead story. It was miscellaneous.

The Pearl Street station served eighty-two customers with four hundred lamps on its first day. Within two years, the customer count had grown to just over five hundred, with more than ten thousand lamps in service. The system worked. It was reliable. Edison had proven that centralized electricity generation was commercially viable. The future had arrived.

And then the slow process of diffusion began, taking fifty years to reach most of the country.

By the early 1920s, roughly half of urban American homes had electricity. But rural America was a different story. In 1935, only about eleven percent of American farms had central station power. It would take until the mid-1950s before that number reached ninety percent.

But the light bulb was only the first-order effect of electricity. The deeper transformation came through the electric motor, which allowed each machine to have its own power source, freeing the factory floor from the constraints of centralized steam. Henry Ford's assembly line was impossible without it. Yet that reorganization took roughly twenty-five years to become standard practice. Factory owners had invested heavily in the old systems. Workers had skills organized around the old layout. The shift didn't happen because someone installed a new power source. It happened because someone was willing to rethink the building.

The light arrived quickly. The reorganization took decades. That gap between capability and adoption is the story of every transformative technology, and it is the true measure of diffusion. It is also the defining feature of the current AI transition.

Each generation of transformative technology has closed that gap faster than the last. The telephone took a century to saturate American households, the cellphone took a decade, and generative AI reached a hundred million users in two months. But speed of access is not speed of integration. People adopt tools quickly. Organizations reorganize slowly. Diffusion, in other words, happens in stages: first the technology becomes widely available, then individuals begin using it in real work, and only later do institutions redesign processes, functions, and offerings around it. And for the first time, we have data that lets us see that distance with unusual precision.

The Gap

In March 2026, researchers at Anthropic published a study that offers one of the clearest pictures yet of this distance. Maxim Massenkoff and Peter McCrory introduced a metric they call "observed exposure," which measures not what AI could theoretically do to a job, but what it is actually doing in professional settings. The distinction matters enormously.

Previous attempts to gauge AI's labour market impact have mostly focused on theoretical capability: looking at a task, assessing whether a large language model could plausibly speed it up, and then aggregating across occupations to estimate exposure. These approaches tend to generate alarming numbers. And they have a poor track record. The history of expert prediction about technology's labour market effects is, to put it gently, not encouraging. That track record is the core justification for giving observed data, what people are actually doing with AI, more weight than theoretical exposure estimates.

The Anthropic approach combines theoretical task-level exposure estimates with actual usage data drawn from millions of real Claude conversations, weighting automated and work-related uses more heavily than casual or augmentative ones. The result is a map of where AI is genuinely being used in professional work, not just where it could hypothetically be used.

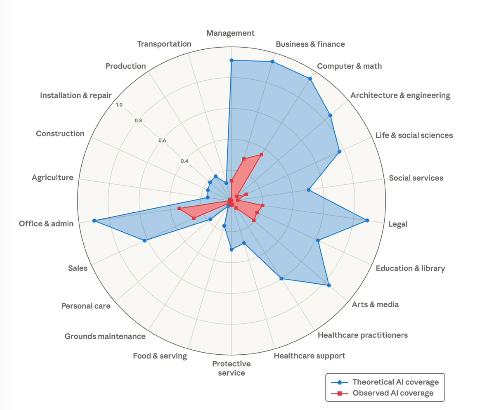

Theoretical capability and observed usage by occupational category (1)

This figure shows the share of job tasks that LLMs could theoretically perform (blue area) and Anthropic’s own job coverage measure derived from usage data (red area).

The findings are striking. In computer and math occupations, large language models are theoretically capable of handling ninety-four percent of tasks. In practice, actual observed coverage is just thirty-three percent. In office and administrative roles, theoretical capability covers ninety percent of tasks, but real-world usage covers a fraction of that. Computer programmers are the most exposed occupation, with seventy-five percent of their tasks already showing significant AI coverage. Customer service representatives follow at seventy percent. Data entry keyers at sixty-seven percent. At the other end, thirty percent of workers have zero observed AI coverage: cooks, mechanics, bartenders, lifeguards, dishwashers.
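The size of that gap can be made concrete with a little arithmetic. The sketch below uses only the two computer-and-math figures quoted above. The function name `capability_gap` and the two derived quantities are illustrative choices for this essay, not measures defined in the Anthropic study.

```python
from typing import NamedTuple


class Gap(NamedTuple):
    points: float          # theoretical minus observed, in percentage points
    realized_share: float  # observed coverage as a fraction of theoretical capability


def capability_gap(theoretical_pct: float, observed_pct: float) -> Gap:
    """Compare theoretical task coverage with observed coverage (both in percent)."""
    if not 0 < theoretical_pct <= 100 or not 0 <= observed_pct <= 100:
        raise ValueError("percentages must lie in (0, 100] and [0, 100]")
    return Gap(
        points=theoretical_pct - observed_pct,
        realized_share=observed_pct / theoretical_pct,
    )


# Figures quoted in the text for computer and math occupations.
computer_math = capability_gap(theoretical_pct=94, observed_pct=33)
print(f"gap: {computer_math.points:.0f} points, "
      f"realized: {computer_math.realized_share:.0%} of theoretical capability")
```

On those figures the gap is 61 percentage points, and observed usage amounts to roughly a third of what the models could theoretically cover.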

The capability exists. The usage lags. And what the gap really measures becomes clearer when you look at adoption not as a single thing but as a set of layers.

The Layers of Adoption

Adoption is not a binary state. It operates across layers, each more difficult and more valuable than the last. Most organizations are stuck at the first.

Layer One: Task-Level Adoption. This is where most organizations begin and where many remain. An individual uses AI for a specific activity: drafting an email, summarizing a document, writing code. The work gets done faster, but the workflow doesn't change. The org chart doesn't change. The headcount doesn't change. This is the equivalent of putting an electric light in a gas-lit factory. It's better, but it's not transformative.

Layer Two: Process-Level Adoption. This means redesigning a workflow end-to-end so that AI is embedded in the sequence of steps, not bolted onto one of them. A legal team at a mid-size firm offers one example. Instead of using AI merely to draft contracts, the team rebuilds its entire contract review pipeline: AI handles first-pass analysis, flags non-standard clauses, and routes exceptions to the appropriate specialist. Human lawyers handle judgment calls, risk assessment, and client-specific considerations, while AI handles pattern recognition and procedural triage. The cycle time drops from days to hours. But achieving this required coordination across roles, new quality assurance standards, new metrics, and often changes to the software stack itself. This is the equivalent of putting an electric motor on a machine. The power source is better, but the factory floor hasn't been redesigned.

Layer Three: Function-Level Adoption. This is where competitive advantage begins to compound. It means rethinking an entire business function around what becomes possible when cognition is abundant rather than scarce. The function doesn't just do the same work faster. It does different work. A research organization doesn't just accelerate literature review; it can explore hypothesis spaces that no human team could cover. The structure of the function itself changes: fewer generalists, more specialists working in concert with AI systems, different reporting lines, different success metrics. This is the redesigned factory floor.

Layer Four: Product and Service-Level Adoption. The organization doesn't just use AI internally; it builds AI into what it sells. An insurer, for example, might move beyond using AI to process claims faster and launch a new kind of policy that continuously ingests operating data, flags emerging risks, recommends interventions, and changes the customer relationship from episodic reimbursement to ongoing protection. At that point, AI is no longer a productivity tool behind the curtain. It is part of the value proposition itself. This is the layer at which AI-native companies operate from day one, and it is the layer that most established organizations have not yet reached.

The Anthropic data, read through this framework, tells a clear story. Observed AI usage is almost entirely Layer One activity. The deeper layers, where competitive advantage actually compounds, remain thin across most of the economy. McKinsey's 2025 State of AI survey confirms this from a different angle. Eighty-eight percent of organizations now report using AI in at least one business function. But nearly two-thirds remain in the experimentation or piloting phase. Only about one-third have begun to scale. And just seven percent have fully scaled. Access is widespread; integration is rare.

The S-Curve Is Getting Steeper

If the gap between capability and usage is this wide, a natural question follows: how quickly is it closing?

Generative AI has moved faster than almost anything in the historical record. ChatGPT reached one hundred million users within two months of its November 2022 launch. Generative AI reached thirty-nine percent adoption in just two years, a milestone that took the internet five years and personal computing nearly twelve. Analysis by Epoch AI of technology diffusion patterns shows that the time to reach seventy percent household adoption has been declining steadily, from around forty years for technologies introduced in 1900 to around seventeen years for those introduced in 2000. AI appears to be tracking ahead of even that accelerating trend. As explored in the first paper in this series, 'A Century in a Decade,' there is a credible case that the next decade may compress a century's worth of transformation. But the data here suggests the compression applies unevenly: the technology moves at that pace, while the organizations absorbing it do not.

But these numbers measure access adoption, Layer One in the framework above. They do not tell us how quickly organizations move from Layer One to Layers Two, Three, and Four. The infrastructure for AI is largely in place. The internet exists. Cloud computing exists. The models are accessible through a browser. What remains is not a hardware problem but an organizational one. Organizational change has its own physics, governed less by engineering timelines than by human psychology, institutional incentive structures, and the sheer inertia of established ways of working. The S-curve of access may be nearly vertical. The S-curve of integration is flatter. And the distance between them is where competitive advantage is won or lost.

Why the Gap Persists

A startup can adopt a new AI tool in an afternoon. Someone finds it, tests it, shares it with the team, and by Friday it's part of how the company works. An enterprise operates under a different gravity, best understood through what might be called the organizational antibody effect. Large organizations have developed, over decades, sophisticated immune systems designed to protect against bad decisions. Legal reviews new vendors for liability exposure. IT evaluates security posture. Finance questions ROI assumptions. HR assesses workforce impact. Each of these functions is acting rationally within its own mandate. But the cumulative effect of several reasonable objections, each requiring its own review cycle, is that the organization moves at the speed of its slowest approval, while the technology moves at the speed of its fastest release cycle.

The specific concerns are legitimate: data residency, intellectual property exposure, cybersecurity, compliance architectures that predate AI entirely, legacy system integration, and change management at scale. Some of the gap, in other words, is not inertia but judgment: a model that can plausibly assist with a task is not always reliable enough to be trusted with it at production depth. None of this is unreasonable. But a startup that adopted AI eighteen months ago has already compounded its advantage through two product cycles. An enterprise that is still in procurement review for the same capability is not being careful. It is falling behind carefully.

What to Measure

Revenue per employee is becoming a critical differentiator. PwC's 2025 Global AI Jobs Barometer found that industries most exposed to AI are seeing three times higher growth in revenue per employee compared to the least exposed industries. Since generative AI's proliferation in 2022, productivity growth nearly quadrupled in AI-exposed industries like financial services and software publishing, rising from seven percent between 2018 and 2022 to twenty-seven percent between 2018 and 2024. Industries least exposed to AI saw their productivity growth hold flat or decline slightly. To isolate these productivity gains from simple pricing changes, organizations must also track gross margin per employee. If revenue rises while margins stay flat, the gains are largely from market conditions; if both increase, the organization has structurally changed how work gets done.
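The two-ratio test described above can be written down as a simple rule. What follows is a minimal sketch under stated assumptions: the `Period` fields, the `diagnose` function, the 2 percent `flat_band` tolerance for what counts as "flat," and all of the figures are hypothetical and chosen only for illustration. The third outcome, "no clear gain," is also an addition here, covering the case the text does not discuss.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Period:
    revenue: float       # total revenue for the period
    gross_margin: float  # gross profit (revenue minus cost of goods sold)
    employees: int       # average headcount


def pct_change(before: float, after: float) -> float:
    """Relative change from before to after, as a fraction."""
    return (after - before) / before


def diagnose(prev: Period, curr: Period, flat_band: float = 0.02) -> str:
    """Classify a period-over-period change using the two per-employee ratios.

    flat_band is a hypothetical tolerance: growth of 2% or less counts as flat.
    """
    rev_growth = pct_change(prev.revenue / prev.employees,
                            curr.revenue / curr.employees)
    margin_growth = pct_change(prev.gross_margin / prev.employees,
                               curr.gross_margin / curr.employees)
    if rev_growth > flat_band and margin_growth > flat_band:
        return "structural"         # both ratios rose
    if rev_growth > flat_band:
        return "market conditions"  # revenue rose, margin per employee did not
    return "no clear gain"


# Made-up numbers for illustration only.
prev = Period(revenue=10_000_000, gross_margin=4_000_000, employees=100)
pricing_led = Period(revenue=12_000_000, gross_margin=4_000_000, employees=100)
redesigned = Period(revenue=12_000_000, gross_margin=5_400_000, employees=100)

print(diagnose(prev, pricing_led))
print(diagnose(prev, redesigned))
```

In this example both later periods show the same twenty percent rise in revenue per employee. Only the second, where gross margin per employee rises as well, would count as a structural change under the rule.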

The scaling ratio, the proportion of AI initiatives that have moved beyond pilot into production, is emerging as the most honest internal diagnostic. McKinsey's data suggests that only about six percent of organizations qualify as "AI high performers." These high performers share a common trait: they are nearly three times more likely to have redesigned workflows around AI rather than bolted AI onto existing processes.
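As a rough illustration, the scaling ratio is just a count of production initiatives divided by the total. The portfolio below is invented, and the stage labels ("experiment," "pilot," "production") are assumed names for this sketch rather than McKinsey's categories.

```python
from collections import Counter

# Hypothetical initiative portfolio; names and stage labels are illustrative.
initiatives = {
    "contract triage": "production",
    "support chatbot": "pilot",
    "forecasting assistant": "pilot",
    "code review helper": "production",
    "marketing copy drafts": "experiment",
}


def scaling_ratio(stages: dict[str, str]) -> float:
    """Share of initiatives that have moved beyond pilot into production."""
    if not stages:
        raise ValueError("no initiatives to measure")
    counts = Counter(stages.values())
    return counts["production"] / len(stages)


print(f"scaling ratio: {scaling_ratio(initiatives):.0%}")
```

Here two of five initiatives are in production, for a ratio of forty percent. Counting initiatives equally is the simplest choice; an organization might reasonably weight them by budget or by headcount affected instead.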

The skill premium is another leading indicator. PwC found that workers with AI skills now command a fifty-six percent wage premium over comparable workers without those skills, up from twenty-five percent the year before. The skills sought by employers are changing sixty-six percent faster in AI-exposed occupations. When the skills an industry demands are changing that quickly, the organizations that are slow to reskill are building a compounding disadvantage, not just in productivity, but in their ability to attract the talent that will drive the next wave of adoption.

The Front Door Is Narrowing

The Anthropic researchers found that while there is no systematic increase in unemployment among highly AI-exposed workers yet, hiring of younger workers aged twenty-two to twenty-five has slowed by roughly fourteen percent in exposed occupations since ChatGPT launched. The front door is narrowing before the building shakes.

The labour market effects of AI are not arriving as mass layoffs, the scenario that dominates public anxiety. They are arriving as a quiet contraction of entry points. The jobs that traditionally served as on-ramps for young professionals (research assistance, junior copywriting, basic data analysis, first-level customer support) are precisely the ones where AI adoption is most advanced. The structure is still standing, but the doorways are getting smaller.

The Defining Feature

The rate of diffusion of technology is accelerating. AI is no exception. By any measure of access adoption, it is moving faster than electricity, faster than the telephone, faster than the internet, faster than the smartphone.

But integration adoption, the kind that actually reshapes economies and organizations, has always been slower. It requires not just better tools but better leadership: the willingness to redesign, the capacity to manage change, the patience to rebuild while the old system is still running.

The gap between what AI can do and what organizations are actually doing with it is the defining feature of this moment. The Anthropic data makes it visible. The PwC data makes it measurable. The McKinsey data makes it organizational. And the adoption layers make it actionable: any leader can locate where their organization sits and see the distance that remains.

Edison's customers in 1882 were early, but being early with a light bulb did not guarantee being early with a redesigned factory. The organizations that captured the full value of electricity were not the first to install lights. They were the first to rethink the building.

The same will be true of AI. The rate of diffusion has changed. The question is whether the rate of rethinking can change with it.

A Counterargument

The Case for the Gap

The distance between what AI can theoretically do and what organizations are actually using it for is frequently described as a problem: a measure of institutional sluggishness, a competitive risk, a failure to keep pace. But there is another way to read that distance. It may be a measure of the technology's actual limitations, accurately assessed by the people closest to the work.

The studies that estimate AI's theoretical capability tend to define it broadly: could a large language model plausibly speed up this task? But plausibility is doing a lot of work in that question. A model that can draft a contract is not the same as a model that can draft a contract a lawyer would send to a client without substantial revision. A model that can write code is not the same as a model that can write code that works reliably in a production environment with complex dependencies. When professionals look at AI and choose not to use it for tasks it could theoretically perform, they may not be exhibiting resistance to change. They may be exercising judgment.

It has become common to frame the adoption gap as a set of frictions: procurement delays, legacy system integration, regulatory burden, change management overhead. These are real, but they are solvable with better processes and sufficient will. The deeper issue is different in kind. A model that hallucinates, that loses coherence across complex multi-step workflows, or that produces confident-sounding analysis built on misunderstood premises has a capability problem, not an adoption problem. Conflating the two leads to a prescription that organizations should be moving faster. Some of them should be moving more carefully.

The instinct to taxonomize adoption into layers, from individual task use through process redesign to full organizational transformation, is useful as description. But it carries an implicit normative weight: that deeper is better, that organizations at the first layer are falling behind those at the third. This assumes that deeper integration is always the right target. For many organizations, task-level adoption may be the appropriate steady state, not a way station. A law firm that uses AI to draft first passes but keeps human lawyers in control of every substantive decision is not failing to adopt. It is adopting correctly for its domain, given the current reliability of the technology and the consequences of getting things wrong.

The electricity analogy that often accompanies these arguments reinforces the bias toward speed. Factories that were slow to reorganize around electric motors are cast as cautionary tales. But the factories that reorganized early were not uniformly rewarded. Many early adopters faced costly failures: production disruptions, worker resistance, and equipment incompatibilities that weren't apparent until scale. The survivors wrote the history. The failures are forgotten. Survivorship bias in technology narratives tends to make speed look wiser than it was.

There is also a structural difference between AI and the technologies it is most often compared to. Electricity and the telephone were general-purpose but stable: once installed, they worked the same way year after year. The investment case for deep integration was straightforward because the underlying capability wasn't going to shift beneath you. AI models are improving rapidly and unpredictably, which means that an organization that integrates deeply around today's capabilities may find itself locked into architectures that tomorrow's models make obsolete. There is a reasonable case for strategic patience: adopt at the task level, build organizational fluency, develop evaluation frameworks, and wait for the technology to stabilize before committing to the deeper structural changes that are difficult and expensive to reverse.

The gap between capability and adoption is real. But the assumption that it represents latent value waiting to be captured deserves scrutiny. It may equally represent risk waiting to be incurred. The organizations that history remembers as wisely cautious and those it remembers as fatally slow look identical in the early years. The difference only becomes clear later, which is precisely why the decision is hard, and why the case for speed is less obvious than it appears.

The Canary Papers

The Canary Papers is a six-essay series drawn from executive conversations on AI adoption and strategic change. Named after the early detection systems once used in coal mines, the series focuses on signals rather than headlines: where capabilities are compounding, where organizations are lagging, and where competitive gaps are quietly widening. Each paper examines a distinct pressure point, from displacement timelines to diffusion barriers and trust costs. The aim is not prediction, but disciplined clarity: to help leaders recognize structural shifts early enough to act with intention rather than react under pressure.